Microsoft Says Copilot Is for “Entertainment Only” in Terms of Use

Microsoft has come under scrutiny after users discovered that Copilot’s terms of use state the AI tool is intended for entertainment purposes only, despite being marketed as a powerful productivity assistant.

The clause, updated on October 24, 2025, quickly raised concerns among both everyday users and enterprise customers.

A Surprising Disclaimer

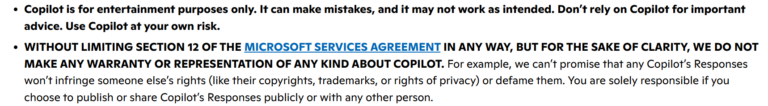

According to the terms:

- Copilot may produce incorrect results

- It may not function as expected at all times

- Users are advised not to rely on it for critical decisions

Most importantly, Microsoft emphasizes that all risks fall on the user.

This language appears to contradict the company’s push to integrate AI deeply into work environments.

Microsoft Responds to Backlash

Following criticism, Microsoft described the wording as “legacy legal language.”

The company explained that:

- The disclaimer comes from earlier development stages

- It does not reflect the current capabilities of Copilot

Microsoft has also confirmed plans to update the wording in future revisions.

Why AI Companies Use These Warnings

This type of disclaimer is not unique to Microsoft.

Other major AI companies, such as OpenAI and xAI, include similar warnings, reminding users that:

- AI outputs are not guaranteed to be accurate

- Responses should not be treated as absolute truth

These statements are designed to reduce legal liability in case of incorrect or harmful outputs.

The Gap Between Marketing and Reality

The situation highlights a growing tension:

- 🚀 Marketing: AI as a reliable productivity tool

- ⚖️ Legal reality: AI as a system that can still fail

Even as AI becomes more advanced, companies continue to protect themselves by urging users to verify information independently.

What This Means for Users

While Copilot remains a powerful assistant, the episode serves as a reminder:

- Always double-check AI-generated information

- Avoid relying on it for high-risk decisions

- Treat AI as a tool, not a final authority

A Wake-Up Call for AI Trust

The controversy reinforces an important takeaway:

👉 AI is helpful, but not infallible.

Even if Microsoft updates the wording, the core message remains unchanged: users must stay cautious and critical when using AI in real-world scenarios.